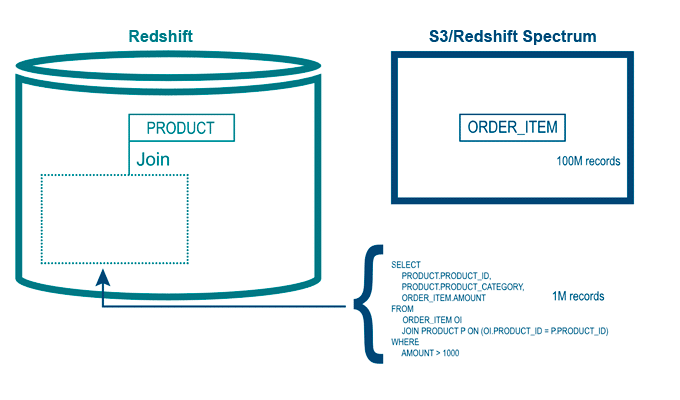

Users of Amazon Redshift can now run cross-database queries and share data across Redshift clusters, as AWS has released these enhancements to general availability (GA). Redshift users often create several databases to separate business concerns, development environments, maturity levels, and so on. They also create separate databases for different stages of ETL processes. These databases usually need to be queried together for integration and testing purposes. Yet before Amazon introduced cross-database querying in preview in late 2020, users could not query two or more databases in a single query.

Cross-database querying is available only on RA3 instance types. On other instance types, users need to copy one of the two databases to S3 and query it with Redshift Spectrum. Users with clusters on non-RA3 instances do have an easy option: migrate to RA3 instances to use cross-database querying and similar features. Although the feature is now GA, cross-database queries still have some limitations, such as restrictions on creating views on top of them.

Similar to database separation, many organizations use separate Redshift clusters based on business factors such as billing, cluster maintenance, and security and compliance requirements. Before Redshift's data sharing feature, users had to copy data from one Redshift cluster to another. Data sharing, in preview since late 2020, lets Redshift users share data between clusters instantly, without copying or moving it from one cluster to another. Users can securely share data with other clusters at different levels, including schemas, tables, functions, and so on. As with cross-database queries, data sharing is available only on RA3 instance types.

These features are enabled by the next generation of Redshift instances, which run on the AWS Nitro System. Redshift's RA3 instances were launched to challenge Snowflake and are built on the same philosophy of decoupling storage and compute. Continuing the progress on RA3, earlier in 2021 Amazon launched the RA3.16xlarge and RA3.4xlarge instance types in the AWS GovCloud (US) regions and RA3.xlplus in all AWS regions. Redshift also doubled the managed storage quota to 128 TB per node. Amazon is keen to migrate existing customers from legacy Redshift instances to RA3 so that they can get the most out of the latest developments in Redshift.

In Amazon Redshift, a schema is a namespace that groups database objects such as tables, views, stored procedures, and so on. Organizing database objects in schemas helps with security monitoring and logically groups the objects within a cluster. In this recipe, we will create a sample schema that will be…

There are a few ways you can attack this. But first: trying to use a database engine for functions beyond querying the database is a waste of horsepower and the road to database lock-in. So I'm going to focus on ways to do this before the database.

The most complete way is to use a front-end system that clients connect to and that in turn connects to the database. The one I've used in the past is pgbouncer-rr, which pools connections to the database but also allows the SQL to be modified before it is sent on. This will do what you want, but you will need a machine to run it.

If you use the Redshift Data API, you could put a Lambda function in series that performs the SQL modifications you want (but make sure you get your API permissions right). However, I expect it is unlikely that you are looking to move to an API access model.

Many SQL workbenches (benches) support variable substitution, so simple replacements in the SQL can be done by the bench itself. However, this depends heavily on which bench you use and on having every user's bench configured correctly.

Bottom line: if you want something to modify your SQL, do it before it goes to Redshift.
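To make the "modify the SQL before it reaches Redshift" idea concrete, here is a minimal sketch of a query-rewrite hook. It assumes the hook shape described in the pgbouncer-rr README (a Python function `rewrite_query(username, query)` that returns the SQL to forward); check that README for how the module is actually configured. The rule table, the `${schema}` placeholder, the per-user schema map and the `sales_summary_mv` view are all hypothetical, chosen only to illustrate a regex rewrite plus a simple variable substitution.

```python
import re

# Hypothetical rewrite rules: (compiled pattern, replacement).
# This one redirects queries on a raw "sales" table to a pre-aggregated
# materialized view; it assumes the view exposes the columns being queried.
REWRITE_RULES = [
    (re.compile(r"\bFROM\s+sales\b", re.IGNORECASE), "FROM sales_summary_mv"),
]

# Hypothetical per-user schema mapping, used for a simple variable
# substitution similar to what some SQL workbenches do client-side.
USER_SCHEMAS = {"analyst": "analytics_dev", "etl": "staging"}
DEFAULT_SCHEMA = "public"


def rewrite_query(username: str, query: str) -> str:
    """Return the SQL that should be forwarded to Redshift.

    Substitutes the ${schema} placeholder with the user's schema, then
    applies each regex rule in order. Queries that match nothing are
    returned unchanged.
    """
    schema = USER_SCHEMAS.get(username, DEFAULT_SCHEMA)
    new_query = query.replace("${schema}", schema)
    for pattern, replacement in REWRITE_RULES:
        new_query = pattern.sub(replacement, new_query)
    return new_query


if __name__ == "__main__":
    # Placeholder substitution for a known user.
    print(rewrite_query("analyst", "SELECT * FROM ${schema}.events"))
    # Table redirection for an unknown user (falls back to "public").
    print(rewrite_query("bob", "select store, sum(total) from sales group by store"))
```

Because `\bsales\b` does not match `sales_summary_mv`, applying the hook twice gives the same result, which matters if queries can pass through more than one rewriting layer. The same function could, in principle, be called from a Lambda function placed in front of the Redshift Data API before the statement is submitted; that wiring is not shown here.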
